The core argument

95% of enterprise AI pilots fail to deliver measurable financial returns. The common diagnosis lands on data quality, governance, and ownership. Those are symptoms. The disease: organizations ask "how do we use AI?" instead of "how do we redesign the work?" Asking the second question is the reason dishwashers don't have arms.

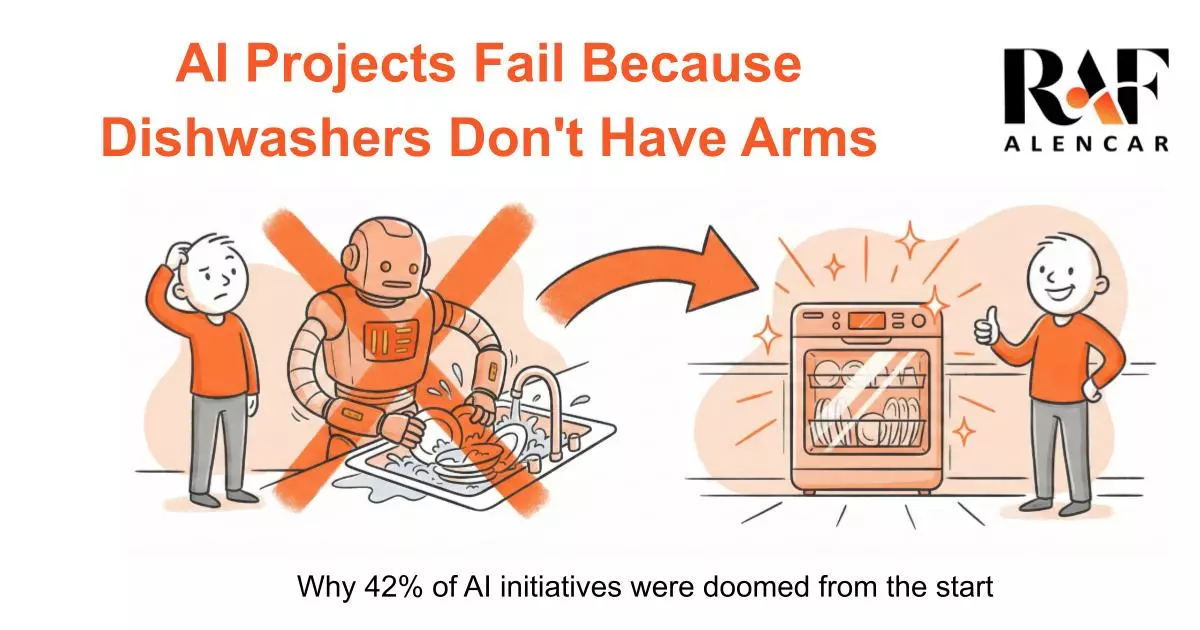

The Humanoid Dishwasher Problem

Imagine solving dirty dishes at scale by building a humanoid robot that stands at your sink, picks up each dish, scrubs it, rinses it, and places it in the drying rack. Same process. Different actor. That's absurd, and it's not what we built.

We built dishwashers — machines that look nothing like humans, operate nothing like humans, and redesigned the entire task of cleaning dishes from first principles. Less water. More energy efficient. More thorough and consistent than humans ever could be. We didn't replace the human. We redesigned the work.

This Pattern Repeats Across Every Major Technological Shift.

| Technology | Wrong Approach | What Actually Worked |

|---|---|---|

| Steam Engine | Ox-shaped machine pulling the same plow | Redesigned agriculture entirely |

| Automobile | Robot driver operating a horse carriage | Redesigned transportation entirely |

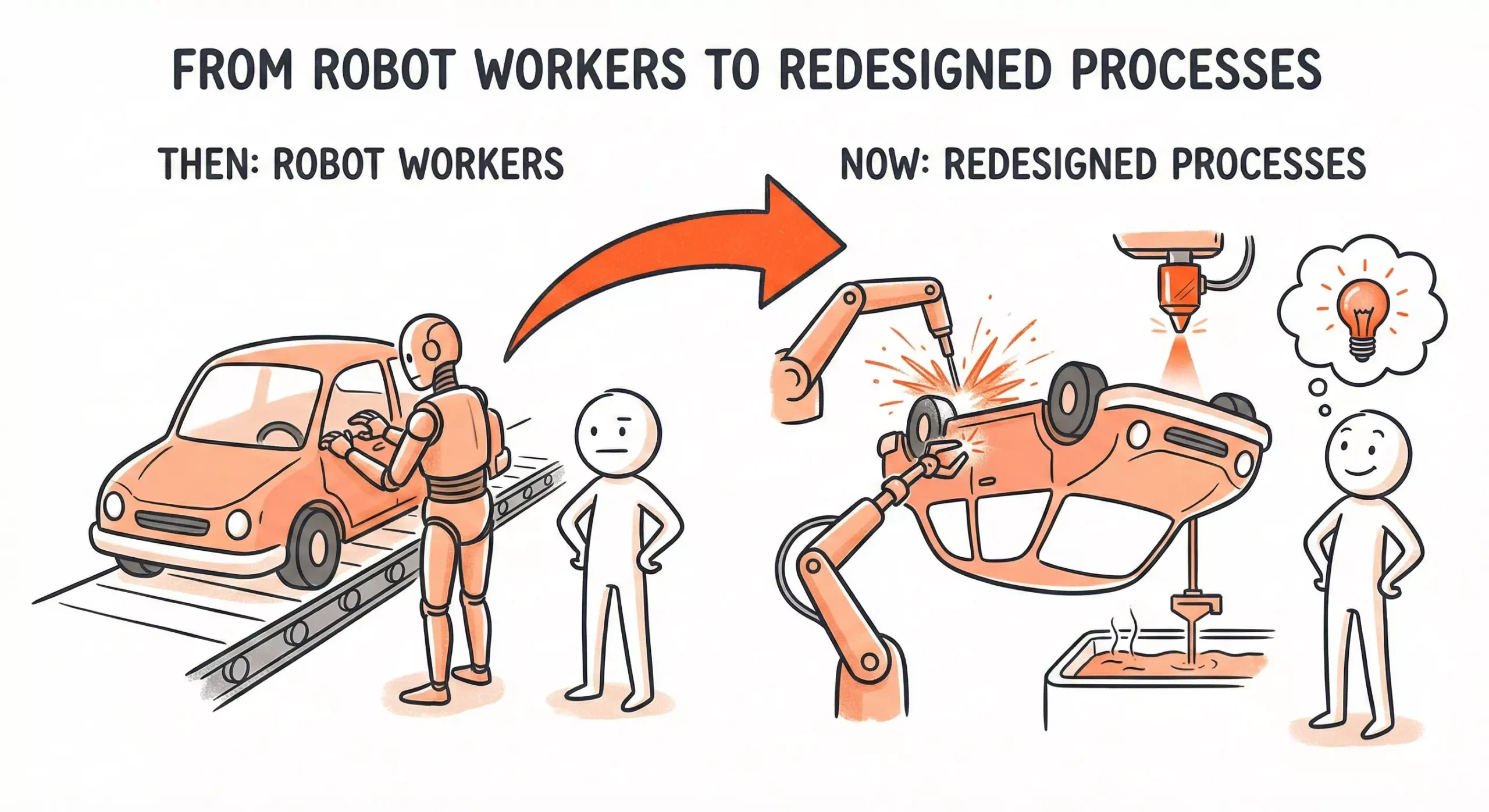

| Car Manufacturing | Robots mimicking human assembly workers | 3D printing, flipping cars upside down, precision welding, chemical vat immersion |

| AI in Business | Chatbot answering the same questions humans answered | Still waiting for most organizations to figure this out |

The Lindy Trap.

Skeuomorphism means preserving the interface of the old tool because it feels familiar, even when the engine underneath has completely changed. It's paving the cow path.

The Lindy Effect of bad design: the longer a flawed structure survives, the more legitimate it feels, even when the environment that produced it no longer exists. Ox-powered farming worked for millennia. Horse-drawn transport worked for centuries. Longevity feels like proof of fitness. But survival in the old environment doesn't mean optimality in the new one.

Real transformation in car manufacturing came when manufacturers stopped asking "how do we replace the human at this station?" and started asking "how would we build a car if humans weren't a constraint?" The answer: modular assembly, flipping entire car bodies upside down, dunking frames into chemical vats, 3D printing components that couldn't be manufactured any other way. These are processes that were literally impossible for humans to perform. The car succeeded not because it replaced the horse, but because it replaced the carriage.

The Hotline Problem.

Most AI implementations follow this pattern:

Before: Human does Task A → Task B → Task C → Decision

After: Human does Task A → asks AI chatbot about Task B → Task C → Decision

That's not transformation. That's giving someone a hotline. A hotline still requires someone to remember it exists, know what to ask, interpret the response, and decide what to do with it. The process is identical. You just added a resource.
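
One way to make the before/after comparison concrete is to write both workflows down as data and check whether anything changed besides the added resource. The sketch below is illustrative only: `Step`, `shape`, and `is_hotline` are hypothetical names invented for this example, and the step labels are simply the ones from the two workflows above.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Step:
    """One step in a workflow (hypothetical model for illustration)."""
    task: str
    actor: str
    resource: Optional[str] = None  # an aid the actor may consult, if any


def shape(process: List[Step]) -> List[Tuple[str, str]]:
    """Strip resources and keep only who does what, in what order."""
    return [(step.task, step.actor) for step in process]


def is_hotline(before: List[Step], after: List[Step]) -> bool:
    """True when the only change is an added or swapped resource."""
    return shape(before) == shape(after) and before != after


before = [
    Step("Task A", "human"),
    Step("Task B", "human"),
    Step("Task C", "human"),
    Step("Decision", "human"),
]

# Same tasks, same actor, same order; Task B just gains a chatbot to consult.
after = [
    Step("Task A", "human"),
    Step("Task B", "human", resource="AI chatbot"),
    Step("Task C", "human"),
    Step("Decision", "human"),
]

hotline = is_hotline(before, after)
```

Here `hotline` evaluates to `True`: once the resource is stripped away, the two workflows are the same list of tasks and actors. A redesigned process would differ in its shape, with steps removed, merged, reordered, or assigned to a different actor.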

Leaders keep choosing this path because it's safer. Adding a chatbot preserves reporting lines, accountability structures, and political capital. Nobody's job changes. You can claim "AI adoption" without actually redesigning anything. New wine in old wineskins — and the wineskins feel proven.

The Conversations Nobody Wants to Have.

When AI stalls, the blame lands on regulation, the models, or "our data isn't ready." Safe targets. Nobody gets fired for bad data.

But research shows these explanations let everyone off the hook for the actual problem: the conversations nobody wants to have. Should we build this ourselves or partner? Who decides what happens to the data? Who takes the blame if it fails? These aren't technical problems. They're leadership problems disguised as technical ones.

What Actually Works.

The organizations getting transformational results aren't doing it by adding tools. They're doing it by asking a different question first: if this process didn't exist and I were designing it from scratch today, knowing what AI can do, what would I build?

That question produces process redesign. And process redesign is the precondition for AI that actually changes outcomes rather than just adding a resource to the existing dysfunction.