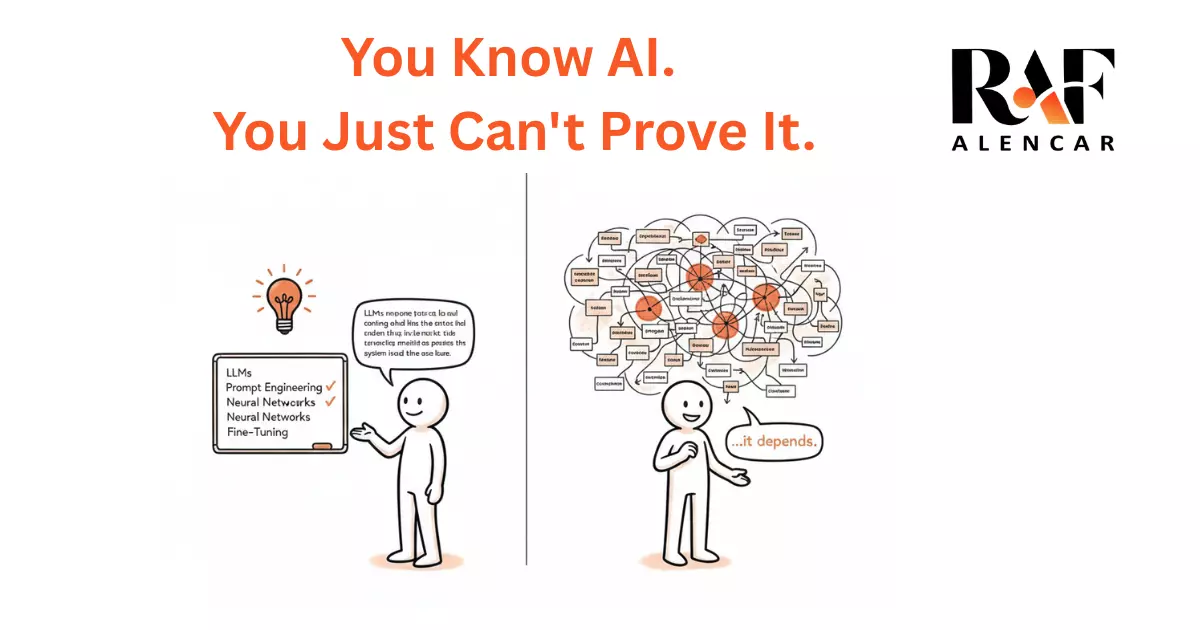

The core argument

The people with the deepest capability are often the worst at signaling it. The market rewards legible credentials. And right now, "AI literacy" is being defined by the people handing out the certificates — not the people doing the work.

In 1999, I was a Canadian teenager living in Brazil.

English was my first language. I'd grown up reading in it, thinking in it, dreaming in it. I'd been building things on computers since I could reach the keyboard — game mods, a chatbot in BASIC, an HTML site back when that meant something, a BBS I ran with my friends.

And yet, when I looked around at my peers in Brazil, they all had something I didn't: a certificate that said they knew English. From a local ESL school. Twenty hours of instruction. A piece of paper.

Their English was functional at best. Mine was native. But in the context that mattered — a resume, a job application, a first impression — their capability was visible and mine wasn't. I didn't know how to prove I spoke English. I just... spoke it.

The Certificate Economy.

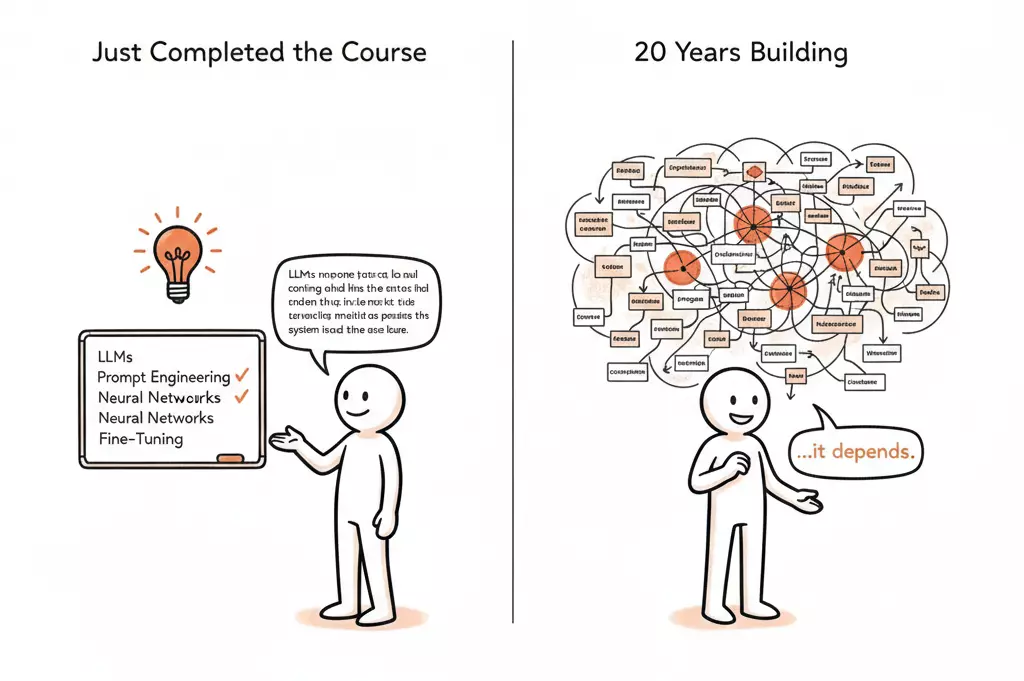

This pattern should feel familiar to anyone who's been in technology long enough.

In the early 2000s, knowing "how to use Word" was a resume line. People took courses for it. Got certificates. Meanwhile, the people who had been using computers since DOS didn't list anything — because what would they even write?

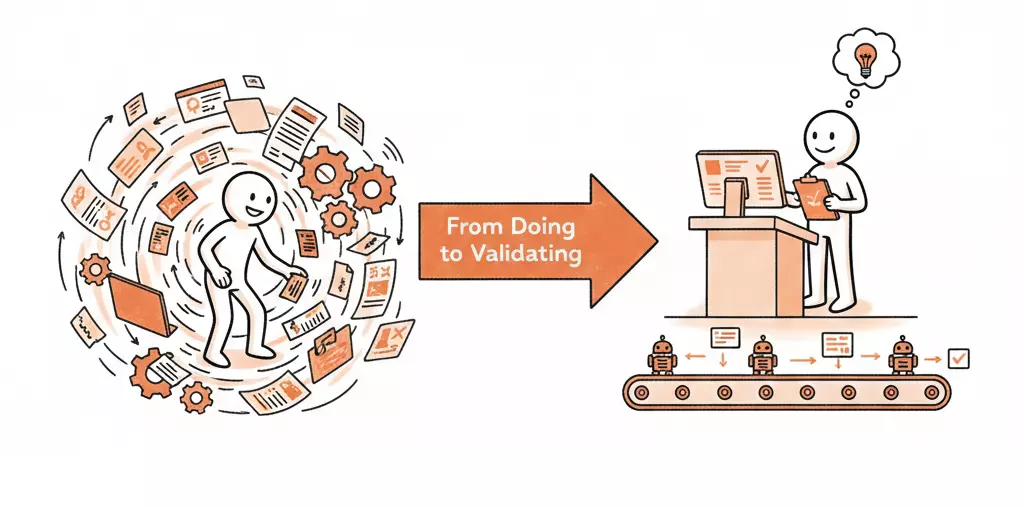

Every time a new capability becomes important, the same cycle plays out:

1. A real skill emerges from practitioners doing the work.

2. The market notices it matters.

3. A credentialing industry forms around it.

4. The credential becomes the proxy for the skill.

5. The people who had the skill first are suddenly behind, because they never needed the credential.

We're at step 5 with AI.

What "Knowing AI" Actually Looks Like.

I've spent over twenty years building things with data and technology. Regression models. Analytics frameworks. System architectures. Production applications with LLM API calls. Prompt engineering pipelines. Agentic workflows — scraping, data enhancement, ETL, analysis, summarization. Full system designs from data layer to output.

None of that comes with a badge.

You know what does come with a badge? A free four-hour course on Coursera.

"Include AI on your LinkedIn profile" is the new "include Microsoft Office on your resume." It sounds like advice. It's actually a symptom of a market that doesn't yet know how to evaluate what it's asking for.

The Legibility Problem.

When a hiring manager, a potential client, or a LinkedIn algorithm encounters your profile, they're pattern-matching. They're scanning for signals. And the signals they've been trained to recognize are credentials, keywords, and badges — not demonstrated capability.

If you've spent years building with data and AI, your actual work is probably:

- Embedded in proprietary systems you can't show publicly

- Described in terms that don't match trending keywords

- Spread across so many domains that it doesn't fit neatly into "AI experience"

- So integrated into how you think that you don't even recognize it as a distinct skill

That last one is the real killer. When something is native to you, you forget it's a skill.

What You Can Actually Do About It.

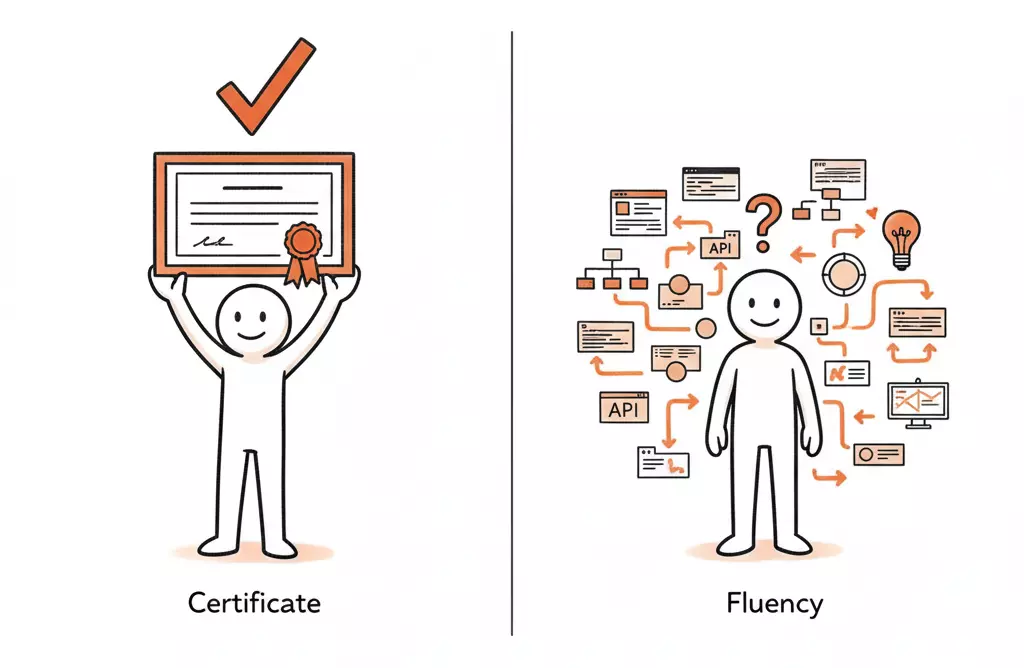

The credentialing system is broken for experienced operators. That doesn't mean you can't create legibility — it just means you have to create it differently.

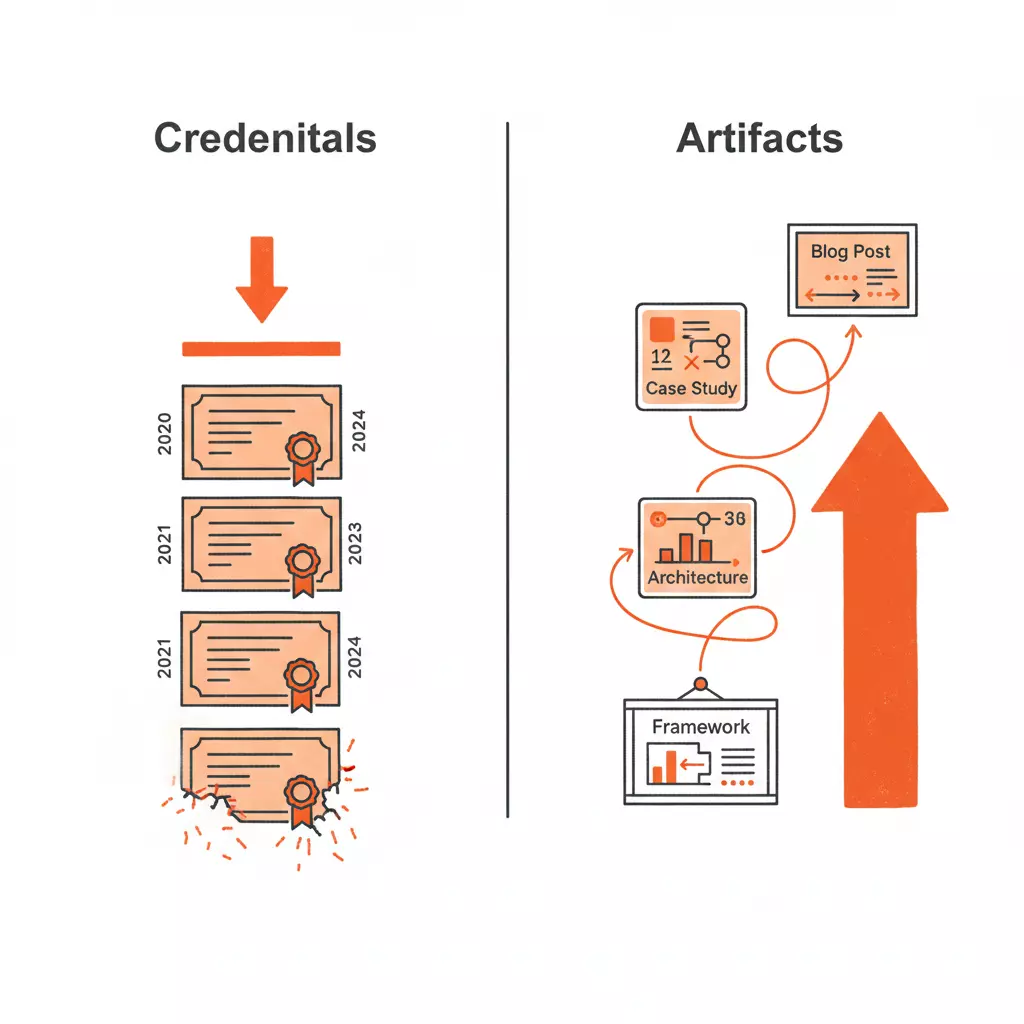

The people solving this problem aren't the ones collecting more certificates. They're the ones who have made their work visible: they have built things publicly, written about what they've learned, and documented the problems they've solved in ways a stranger can understand.

The credential says you took the course. The artifact says you built the thing.

Build the thing. Make it public. Name what you learned.

That's not a marketing strategy. That's the only way the market learns to recognize capability it can't credential.